Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

B.1 The R Software. R FUNCTIONS IN SCRIPT FILE agree.coeff2.r If your analysis is limited to two raters, then you may organize y

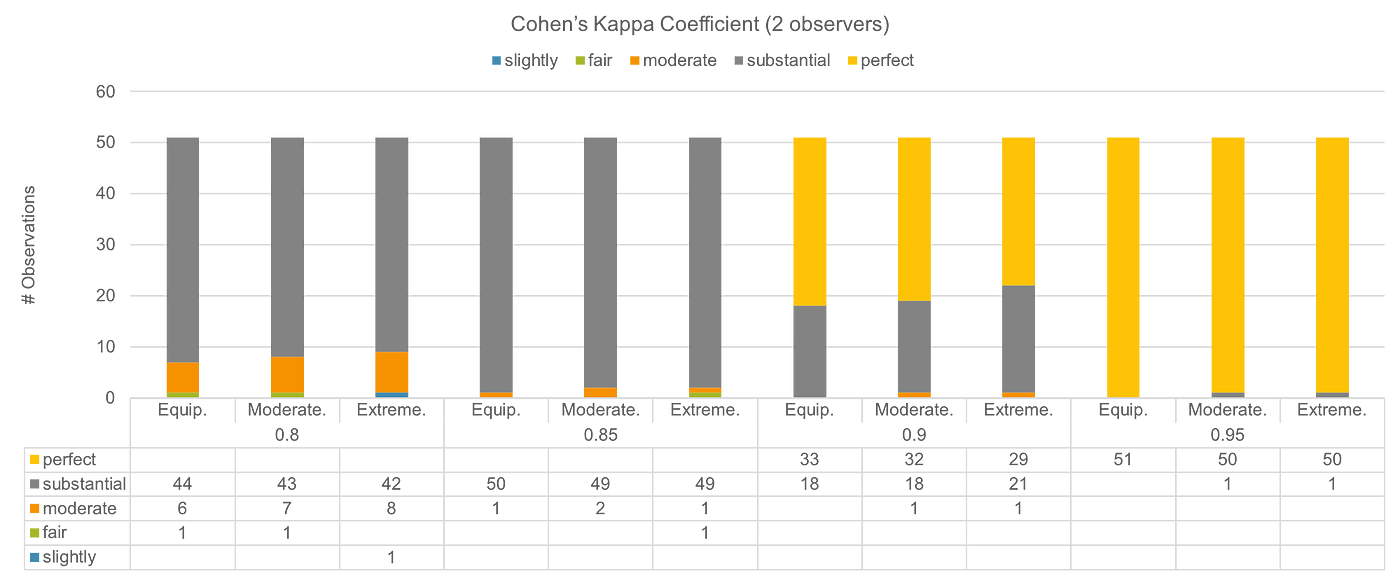

Correlation Kappa Coefficient of the categorical data and the p value... | Download Scientific Diagram

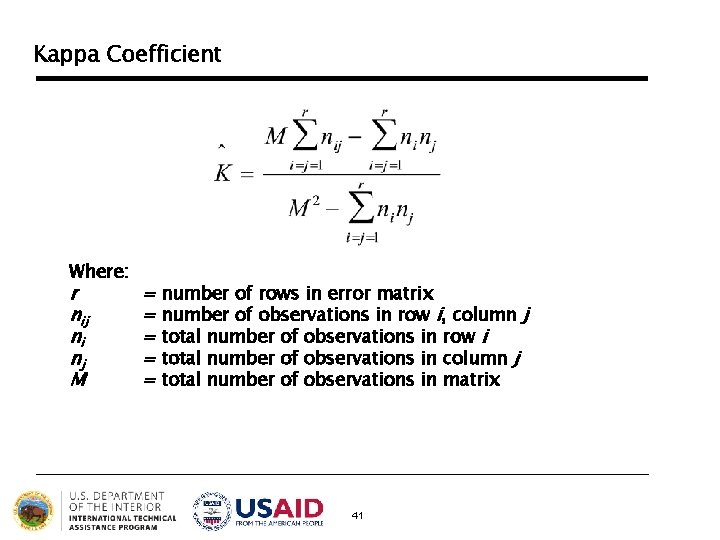

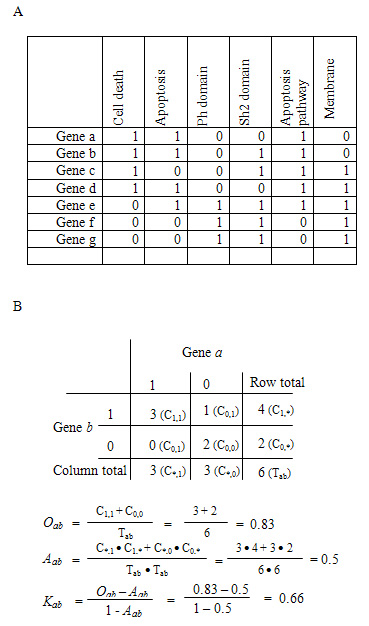

180-30: Calculation of the Kappa Statistic for Inter-Rater Reliability: The Case Where Raters Can Select Multiple Responses from

![Solved 2. Cohens Kappa [R] Two pathologist diagnose | Chegg.com](https://media.cheggcdn.com/study/c7a/c7ae507f-4041-44ca-b57e-39704c37ba0d/image)